At Conductrics we believe that intentional use of machine learning and contextual multi-armed bandits (MAB) often requires human interpretability. Why?

1) Compliance – In many environments it is important to ensure that policies about how and when different people are offered different experiences are followed. To comply with those policies and regulations (for example, see Art. 22 of GDPR), it is often important to know exactly who will get what before using any targeting technology.

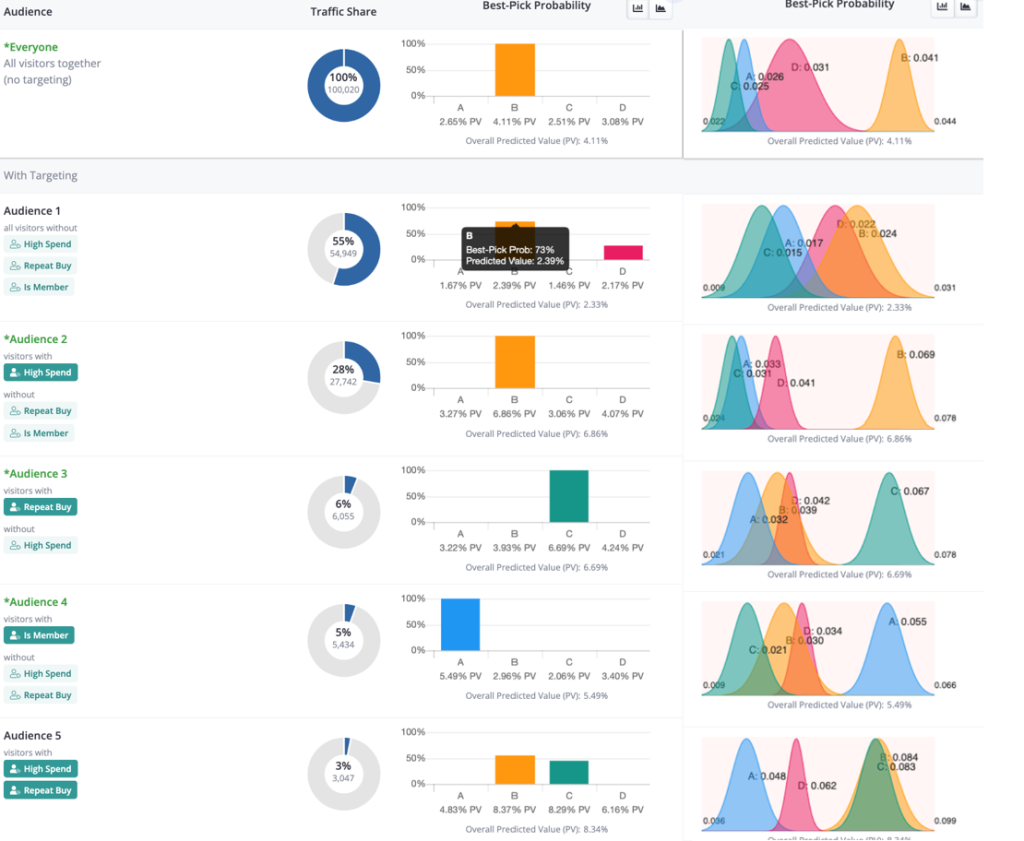

2) Understanding and Trust – Your teams are, or at least are striving to be, experts about your customers. Having interpretable results allows them both to catch inconsistencies (“hey, something is off, there is no way that only 3% of our customers are both Repeat and High Spenders”) and to glean new understandings (“oh, that is interesting, our Low Spend Repeat buyers really seem to prefer offering ‘B’. Let’s maybe run a few targeted follow-up A/B tests around that segment with ideas similar to that ‘B’ offering. Better yet, let’s also set up an A/B Survey to ask that segment a few clarifying questions to get a fuller understanding of their needs.”).

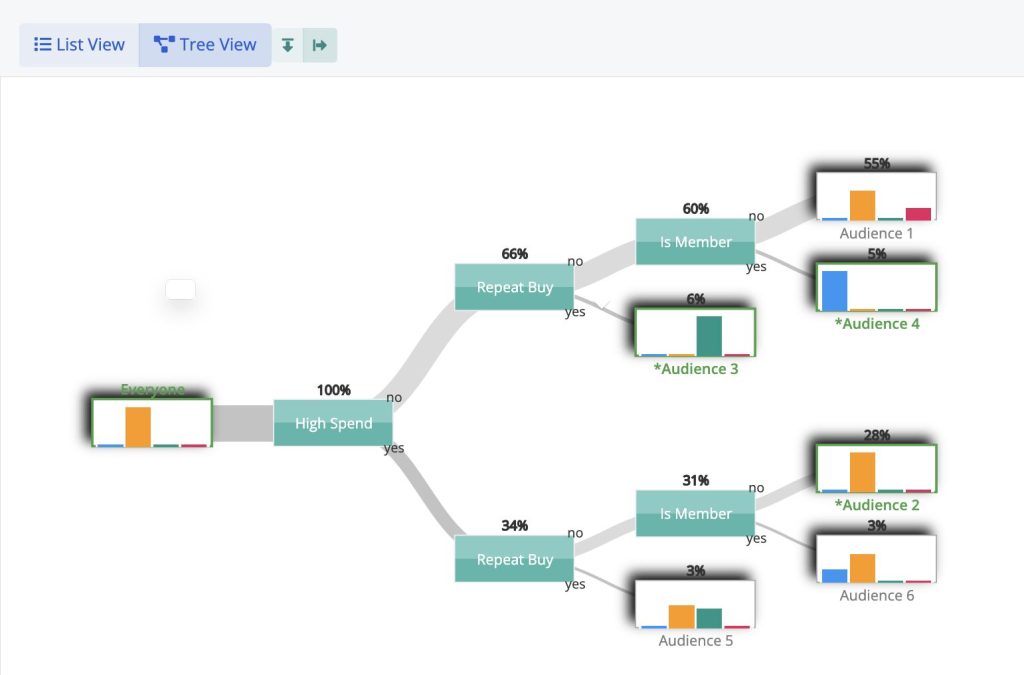

To help facilitate interpretability, Conductrics represents its learning and contextual bandit models in a tree structure. The tree makes it easy for teams to parse the learning/bandit logic: they can see which segment data was selected and quickly scan which options each audience prefers.

Conductrics Tree View
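
To make the idea of a readable tree concrete, here is a minimal sketch in Python. It is not the Conductrics implementation or data format: the `Leaf` and `Split` classes, the `to_rules` helper, the feature names, and the traffic shares are all hypothetical and chosen only for illustration. It shows how a small tree of segment splits can be flattened into plain-language rules that a team can scan.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Leaf:
    best_option: str  # option this audience currently prefers (illustrative)
    share: float      # fraction of traffic reaching this leaf (made-up value)


@dataclass
class Split:
    feature: str      # segment attribute tested at this node
    value: str        # the "yes" branch is taken when feature == value
    yes: "Node"
    no: "Node"


Node = Union[Leaf, Split]


def to_rules(node, path=()):
    """Flatten the tree into mutually exclusive (conditions, leaf) pairs."""
    if isinstance(node, Leaf):
        return [(path, node)]
    yes_path = path + (f"{node.feature} = {node.value}",)
    no_path = path + (f"{node.feature} != {node.value}",)
    return to_rules(node.yes, yes_path) + to_rules(node.no, no_path)


# Hypothetical tree: split on visitor type first, then on spend level.
tree = Split(
    "visitor_type", "Repeat",
    yes=Split("spend", "Low", yes=Leaf("B", 0.30), no=Leaf("A", 0.15)),
    no=Leaf("A", 0.55),
)

for conditions, leaf in to_rules(tree):
    print(" AND ".join(conditions), "->", leaf.best_option,
          f"({leaf.share:.0%} of traffic)")
```

Because every visitor follows exactly one path from the root to a leaf, the resulting rules never overlap, which is what lets a team say in advance who will get what.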

It can also be useful to view the contextual bandit as a set of mutually exclusive rules. For each rule, teams can see the size of its audience, the conditional probability that each option has the highest value, and a visual of the estimated conditional posterior distributions that give rise to those probabilities.

Conductrics Rule View
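
The link between posterior distributions and the "probability of having the highest value" can be sketched with a small Monte Carlo estimate. The sketch below rests on assumptions that are not in the original: the outcome is a binary conversion, each option gets a Beta posterior with a uniform prior, and the rule names and counts are invented. The `prob_best` function is a hypothetical helper, not a Conductrics API.

```python
import random


def prob_best(counts, draws=20000, seed=0):
    """Monte Carlo estimate of P(option has the highest conversion rate).

    counts maps option -> (successes, trials). Each option is given a
    Beta(1 + successes, 1 + failures) posterior (uniform prior, an assumption).
    """
    rng = random.Random(seed)
    wins = {option: 0 for option in counts}
    for _ in range(draws):
        # One joint draw: sample a plausible rate for every option.
        sample = {
            option: rng.betavariate(1 + s, 1 + n - s)
            for option, (s, n) in counts.items()
        }
        # Credit the option whose sampled rate is highest in this draw.
        wins[max(sample, key=sample.get)] += 1
    return {option: w / draws for option, w in wins.items()}


# Invented counts per rule: option -> (conversions, visitors).
rules = {
    "Repeat AND Low Spend": {"A": (40, 1000), "B": (70, 1000)},
    "Repeat AND High Spend": {"A": (55, 500), "B": (50, 500)},
}

for rule, counts in rules.items():
    print(rule, prob_best(counts))
```

The fraction of draws in which an option comes out on top approximates the conditional probability shown for that option within the rule. When the posteriors overlap heavily, the probabilities stay close to even, which is a useful signal that the segment may not matter for that decision.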

In real world applications, being productive with MAB goes beyond which algorithm to use – in fact, that often is the least important consideration. What is important is to think about the nature of your problem, the set of objectives, and then to select and use an approach that is best able to help you achieve those objectives.

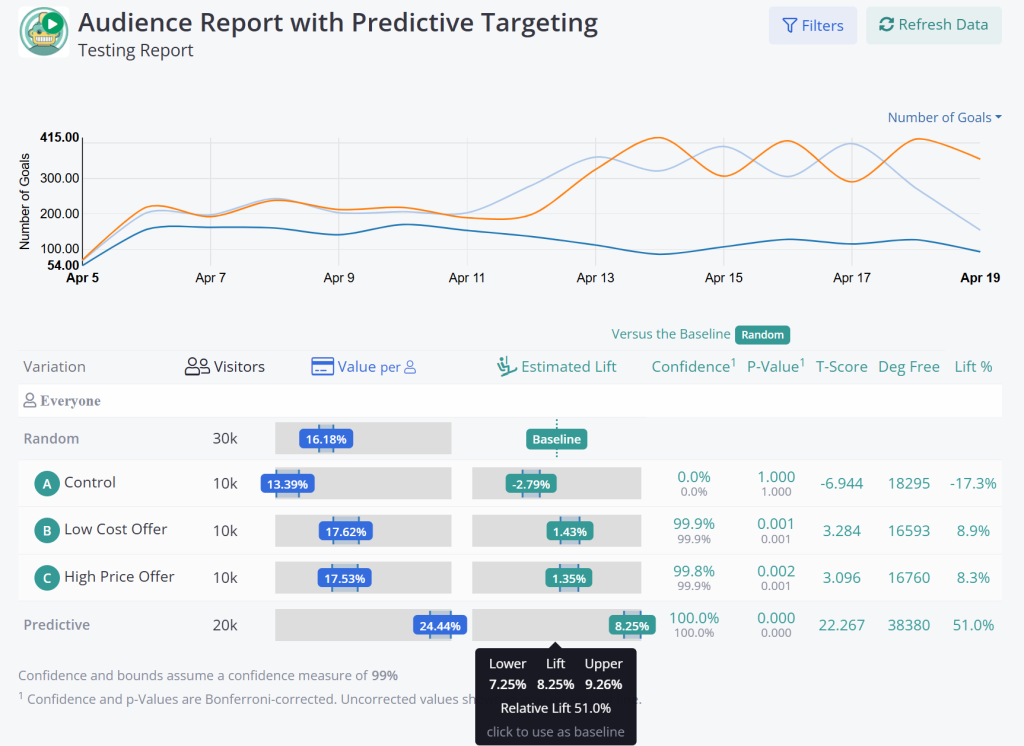

In addition, for many use cases targeting and bandit models really should be integrated into an A/B Testing structure. A/B Testing and MAB are NOT substitutes, but rather complementary approaches. When run on problems where it doesn’t matter which option is selected, or when, a MAB will often appear to be finding a ‘better’ solution than just randomly selecting the options. Comparing the bandit against a control shows whether it is actually adding value, which means that most often it makes sense to A/B test the Bandit.

A/B Testing Contextual Bandits
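
One way to picture A/B testing the bandit is a simulation in which visitors are randomly split between a control arm that selects options uniformly at random and a treatment arm that lets a bandit choose. The sketch below is an illustration under stated assumptions, not the Conductrics method: it uses Thompson sampling with Beta posteriors as a stand-in bandit, binary conversions, a 50/50 split, and made-up conversion rates that are identical across options. The function names are hypothetical.

```python
import math
import random


def run_ab_test_of_bandit(true_rates, visitors=20000, seed=1):
    """Simulate a 50/50 test: bandit-driven selection vs. random selection."""
    rng = random.Random(seed)
    options = list(true_rates)
    stats = {o: [0, 0] for o in options}            # bandit's [successes, trials]
    totals = {"control": [0, 0], "bandit": [0, 0]}  # per arm [conversions, visitors]
    for _ in range(visitors):
        arm = "bandit" if rng.random() < 0.5 else "control"
        if arm == "control":
            choice = rng.choice(options)  # uniform random selection
        else:
            # Thompson sampling: draw from each posterior, pick the largest draw.
            choice = max(options, key=lambda o: rng.betavariate(
                1 + stats[o][0], 1 + stats[o][1] - stats[o][0]))
        converted = rng.random() < true_rates[choice]
        if arm == "bandit":
            # Only the bandit arm's traffic updates the bandit's posteriors.
            stats[choice][0] += converted
            stats[choice][1] += 1
        totals[arm][0] += converted
        totals[arm][1] += 1
    return totals, stats


def two_proportion_z(x1, n1, x2, n2):
    """z-score for the difference between two conversion rates (pooled SE)."""
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    return (x1 / n1 - x2 / n2) / se


# Made-up scenario in which every option has the same true conversion rate.
totals, stats = run_ab_test_of_bandit({"A": 0.05, "B": 0.05, "C": 0.05})
z = two_proportion_z(*totals["bandit"], *totals["control"])
print("bandit traffic per option:", {o: t for o, (_, t) in stats.items()})
print("bandit vs. control z-score:", round(z, 2))
```

In a scenario like this the bandit may still concentrate traffic on one option, but the comparison against the random-selection control is what tells you whether that concentration produced any real lift.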

Remember, multi-armed bandits, simple or contextual, are just additional tools in your toolkit. Let the job guide your pick of the tool rather than trying to look for jobs that fit the tool.